Designing a Unified Log Platform on Azure

I have been working as an App/Infra Solution Architect with Microsoft for five years, helping a diverse set of customers across verticals such as BFSI, ITES, and Digital Native in their journey towards the cloud.

Introduction:

Recently I have seen a similar requirement at many enterprises, especially in FSI: a centralized log store and analytics solution across their IT infrastructure.

In the modern enterprise, IT infrastructure is a complex tapestry woven from on-premises systems, private clouds, and multiple public cloud environments. For large organizations, particularly those in heavily regulated sectors like financial services, managing the sheer volume and diversity of machine-generated logs is not just an operational hurdle – it's a fundamental business requirement for maintaining security, ensuring compliance, and driving performance.

Financial institutions face stringent regulatory requirements for log retention, security monitoring, and compliance reporting. At the customer discussed here, the current log volume exceeds 100 TB daily, creating significant challenges for storage, retention, and cost optimization.

Key Challenges:

The complexity and scale of a large enterprise's infrastructure estate, as observed at our financial sector customer, present significant challenges for traditional or decentralized log management approaches:

Massive Data Volume: Handling the ingestion, processing, and storage of hundreds of terabytes (100TB+) of log data daily requires a platform built for scale and efficiency from the ground up.

Diverse Log Sources: Logs originate from a heterogeneous mix of operating systems (Windows, Linux, Unix, Solaris, Mainframes), network devices, applications, security tools, and cloud services (Azure, AWS, GCP), each generating log data in different formats. Normalizing and analyzing these disparate logs is complex.

Compliance Requirements: Financial institutions face stringent regulations (e.g., SOX, PCI DSS, GDPR, and local financial laws) mandating specific log retention periods (often multi-year), data immutability, audit trails, and strict access controls. Meeting these across a distributed IT environment is critical.

Cost Management: Storing and analyzing large volumes of data for extended periods can be prohibitively expensive. Optimizing storage costs while maintaining compliance and analytical capabilities is a key concern.

Security Analytics: Effectively detecting and responding to increasingly sophisticated cyber threats requires aggregating security-relevant logs from all sources and applying advanced analytics and threat intelligence in near real-time.

Performance Troubleshooting: Quickly identifying the root cause of performance issues across complex application stacks and infrastructure components relies on having centralized, searchable, and correlated performance logs.

Operational Efficiency: Managing decentralized log collection, storage silos, and disparate analysis tools leads to high operational overhead and hinders rapid insights.

Expectation:

Architecture Overview:

Data Collection Options:

Collecting logs from all of these diverse sources is the first major problem, because the estate includes many physical security and network appliances as well as legacy operating systems.

In this solution, different log collection methods are used depending on the type of source. The following log collection options can be integrated with Azure Data Explorer (ADX):

Agent based

Azure Monitor Agent

When to use AMA:

For Windows/Linux VMs (on-prem or cloud) where you need native Azure integration. Collect Windows Event Logs, Syslog, Performance Counters, IIS logs. Best for time-series telemetry and routing to Log Analytics or ADX via Data Collection Rules (DCR). Ideal for VM-centric monitoring and hybrid environments (with Azure Arc).

Fluent Bit

When to use Fluent Bit:

For containers (AKS/Kubernetes), IoT edge, or custom application/system logs. When you need lightweight, high-performance log forwarding with rich parsing (JSON, regex, multiline). Supports multiple outputs (Event Hubs, ADX, Blob, OTLP). Great for streaming pipelines and edge scenarios.

Fluent Bit started as a log processor and forwarder, and that’s still its sweet spot. It can now handle metrics and even output in OTLP, but its core strength is fast, lightweight log pipelines.

Flexible: It supports a wide range of outputs, including Kafka, Loki, ADX, Blob, OTLP/HTTP, and more.

OTel Collector

It natively handles traces, metrics, and logs across receivers, processors, exporters, and connectors, giving multi-signal observability in a single unified pipeline.

When to use the OTel Collector:

When you need multi-signal observability: logs + metrics + traces in one pipeline. For microservices, distributed apps, or multi-cloud environments. Provides advanced processors (sampling, filtering, redaction) and vendor-neutral OTLP. Scales well for complex observability pipelines and integrates with Azure Monitor, ADX, or Event Hubs.

Agent OS Supportability Matrix

| Collector | Linux | Windows | macOS | FreeBSD | Solaris | AIX | Remarks | OS-specific limitations |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| OpenTelemetry Collector | ✔ Official DEB/RPM/APK packages and Docker images | ✔ MSI & ZIP builds | ⚠ Build from source; no official binary | ✖ Unsupported – build failures reported | ✖ Not supported | ✖ Feature request open; no builds yet | First-class support only for Linux (x86-64/ARM64/PPC64-le) and Windows; multi-signal pipeline (logs + metrics + traces). | macOS: manual Go tool-chain build, no CI. FreeBSD: known compilation errors. Solaris/AIX: no upstream support; cannot build some Go deps. |
| Fluent Bit | ✔ RPM/DEB/Alpine packages & Docker images | ✔ Native installer & Chocolatey | ✔ Homebrew / tar.gz | ✔ FreeBSD ports/pkg | ⚠ Source build only; some plugins absent | ⚠ Source build possible; no upstream packages | Very small C binary; wide plugin ecosystem for logs; OTLP input/output available. | Solaris/AIX: must compile; verify needed plugins compile. FreeBSD: some inputs/outputs may be missing. |
| Azure Arc Agent (Connected-Machine + Log Analytics) | ✔ Ubuntu, RHEL, SLES, CentOS, Amazon Linux, Oracle Linux | ✔ Windows Server 2008 R2/2012 R2 and newer (incl. Core) | ✖ Not supported | ✖ Not supported | ✖ Not supported | ✖ Not supported | Purpose-built for Azure governance/monitoring; ships only for Windows & mainstream Linux. | Linux: limited to listed distros; requires systemd & kernel ≥ 3.10. Windows: only server SKUs; no Windows 10/11. All other Unix variants unsupported. |

Log Streaming

Event Hubs (Kafka API):

When to use Event Hubs:

High-throughput streaming of logs, metrics, or events from multiple producers. When you need buffering, replay, and decoupling between data producers and consumers. Ideal for real-time pipelines where logs flow from Fluent Bit or OTel Collector to downstream analytics (ADX, SIEM, Data Lake). Use the Kafka API when existing tools or agents already speak the Kafka protocol.

Why use Event Hubs (streaming):

Flexibility: Log sources only need to know about the Kafka stream; backend teams can swap the underlying data/log warehouse on Azure without touching edge agents.

Durability: Logs are written to disk and replicated, so they survive node failures and can be replayed after outages within the configured retention period.

Scheduled Ingestion

Azure Data Factory (Batch Jobs):

When to use ADF:

For batch ingestion of log files from on-premises or multi-cloud environments. When logs are stored as CSV, JSON, Parquet, or other file formats and need scheduled or event-driven movement. Best for legacy systems or offline sources where real-time streaming isn’t possible. Provides secure hybrid connectivity and broad connector coverage.

Log Collection Options:

Azure-native solutions provide comprehensive capabilities for ingesting different types of logs from diverse log sources.

Windows Servers

Windows Event logs (System, Application, Security)

Performance counters (CPU, Memory, Disk, Network)

IIS logs for web servers

SQL Server logs and metrics

Custom Windows logs and ETW events

Application-specific logs

Linux Servers

Syslog messages (all facilities and priorities)

Performance metrics (CPU, Memory, Disk, Network)

Custom log files with configurable parsing

Journal logs from systemd-based systems

Container logs (Docker, Kubernetes)

Application-specific logs (Apache, Nginx, MySQL, etc.)

AIX/Unix/Solaris Systems

Syslog forwarding to collection servers

Custom connectors for specialized Unix variants

Script-based collection for legacy systems

Direct integration with Event Hubs

API-based collection methods

Log file exports via SFTP/SCP

Network Appliances

Flow logs for traffic analysis

SNMP traps and metrics

Syslog collection from network devices

Azure Network Watcher integration

NSG flow logs

API-based collection from vendor platforms

Firewall Devices

Security events and alerts

Traffic flow logs

Rule match logs

Administrative audit logs

Threat intelligence feeds

VPN connection logs

Cloud Services

Native Azure Services logs

Azure Active Directory logs

Microsoft 365 Defender logs

Azure PaaS service logs

Third-party cloud platform service logs

SaaS Platform

Microsoft M365 Services

Salesforce

Qualys

Zscaler

Ingestion Patterns

Legacy Systems (Unix/AIX/Solaris/CustomOS)

Configure Unix/Solaris systems to forward syslog messages to a central syslog server:

Deploy Linux servers running Fluent Bit or the OTel Collector as syslog collectors.

Configure syslog forwarding on the Unix/Solaris systems to the Fluent Bit forwarder server.

Use Fluent Bit filters to reduce noise in the logs being ingested.

Parse the raw log data into the structured format defined for the syslog table in ADX.
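As a rough illustration of that last parsing step, the query below splits one RFC 5424-style syslog line into typed columns with the KQL `parse` operator. The sample line, the `RawLine` column, and the output column names are assumptions made for this sketch; many legacy devices emit RFC 3164 or vendor-specific formats that would need a different pattern.

```kusto
// Hypothetical sample line; in practice RawLine would come from a raw landing table.
datatable(RawLine: string)
[
    "<38>1 2025-08-31T16:40:04Z fw01 sshd 2211 - - login failed for user admin"
]
// Split the header fields on single spaces; Message takes the remainder of the line.
| parse RawLine with "<" Pri:int ">" Version:int " " Timestamp:datetime " " Host:string " " App:string " " ProcId:string " " MsgId:string " " StructuredData:string " " Message:string
// The syslog PRI value encodes facility * 8 + severity.
| extend Facility = Pri / 8, SeverityCode = Pri % 8
| project Timestamp, Host, App, Facility, SeverityCode, Message
```

The same pattern could live in a stored function so that every syslog source is shaped consistently before analysts query it.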

Windows/Linux/FreeBSD Server

Option A — AMA (Azure Monitor Agent)

Best for: Windows Events, Syslog, Performance Counters (time‑series), IIS logs, “VM observability first”.

Pattern

Onboard servers to Azure Arc (for hybrid) or use native Azure VMs.

Deploy AMA and configure Data Collection Rules (DCR):

Windows: WindowsEvent, IIS (custom text), Perf counters

Linux: Syslog, Perf counters

Route to Log Analytics; optionally export or data connect to ADX for advanced KQL and long‑term analytics.

Option B — Fluent Bit (lightweight log shipping + rich parsing)

Best for: Containers/AKS, edge, file/journald/syslog logs with multiline/regex parsing; routing to Event Hubs (Kafka API) → ADX.

Pattern

Install Fluent Bit on Windows/Linux/Unix servers.

Collect logs: journald/systemd, syslog, file tail (IIS, app logs).

Apply filters (multiline, parser, modify, lua/wasm if needed).

Output to Azure Data Explorer directly, or to Event Hubs (Kafka API) for buffering and replay.

ADX ingests from the Event Hub (data connection + JSON mapping).
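For the final step, the Event Hub data connection needs a target table and a JSON ingestion mapping on the ADX side. The commands below are a minimal sketch: the table name matches the Fluent Bit sample later in this post, while the column names, the mapping name, and the JSON paths (taken from the sample raw logs) are assumptions. Management commands are run one at a time.

```kusto
// Landing table for JSON records arriving from the Event Hub (schema is illustrative).
.create table FluentBitLogs (Timestamp: datetime, Level: string, Service: string, Message: string)

// JSON ingestion mapping that the Event Hub data connection would reference by name.
.create table FluentBitLogs ingestion json mapping "FluentBitLogsMapping"
'['
'  {"column": "Timestamp", "Properties": {"Path": "$.time"}},'
'  {"column": "Level",     "Properties": {"Path": "$.level"}},'
'  {"column": "Service",   "Properties": {"Path": "$.service"}},'
'  {"column": "Message",   "Properties": {"Path": "$.log"}}'
']'
```

Keeping the mapping in ADX rather than in each agent means a field rename only has to be handled in one place.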

Option C — OpenTelemetry Collector (multi‑signal pipelines)

Best for: Logs + Metrics + Traces together (microservices, distributed apps), standard OTLP, centralized processing (sampling, filtering, redaction).

Pattern

Run OTel Collector as a service/sidecar/DaemonSet.

Receivers: filelog (app logs), syslog, hostmetrics/windowsperfcounters, otlp (SDKs).

Processors: batch, attributes, memory_limiter, optional transform/redaction.

Export:

Kafka exporter → Event Hubs (Kafka API) → ADX (logs)

azuremonitor exporter → Azure Monitor / Application Insights (traces/metrics)

Sample OTEL Config YAML

Kubernetes/Containers (OpenShift/ARO/EKS/GKE)

Fluentd (lightweight log shipping + rich parsing)

Best for: Containers/AKS, edge, file/journald/syslog logs with multiline/regex parsing; routing to Event Hubs (Kafka API) → ADX.

Pattern

Deploy Fluentd as a DaemonSet to collect logs from Kubernetes/containers.

Collect logs: container stdout/stderr from all pods by tailing CRI log files such as /var/log/containers/*.log, with Kubernetes context (pod name, namespace, labels, and annotations) added to each log record via the kubernetes_metadata_filter plugin.

Apply filters (multiline, parser, modify, lua/wasm if needed).

Output to Azure Event Hubs (Kafka API) for buffering and replay.

ADX ingests from the Event Hub (data connection + JSON mapping).

Network Device and Firewall Collection

A) SNMP (device health & performance)

Azure Monitor does not poll SNMP on your behalf. Use one of:

Prometheus SNMP Exporter adjacent to devices → OTel Collector prometheusreceiver → export to Azure Monitor metrics or ADX.

OTel Collector snmpreceiver (contrib) to poll SNMP v3 directly → export as metrics.

Always prefer SNMP v3 (auth+priv), restrict polling to a management network, and standardize on MIBs + targets.yaml.

B) Syslog (recommended primary path)

Configure devices to send syslog (prefer TCP/TLS if supported, else UDP) to a collector VM.

Run rsyslog/syslog‑ng on the VM to receive and write messages to files (e.g., per‑device/per‑program).

Choose one of:

Azure Monitor Agent (AMA): Create a DCR (Syslog data source) to read the local syslog files and route to Log Analytics (and/or ADX via export).

Fluent Bit: Use the syslog input to receive logs directly (UDP/TCP), apply parsing/enrichment, and forward to Event Hubs (Kafka API), ADX, or Blob/ADLS.

OTel Collector: Use the syslogreceiver (contrib) to ingest syslog, then process and export to Event Hubs, Azure Monitor, or ADX.

C) API-based collection (for vendors that expose logs/telemetry over REST/stream)

Azure Functions or Logic Apps to poll or stream vendor APIs (pagination, throttling, retries).

Normalize to a common schema (time, device, severity, category, message, properties) and emit to Event Hubs or ADX.
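On the ADX side, the common schema above could be expressed as a single table that every vendor connector writes to. This is only a sketch: the table name `VendorEvents`, the column types, and the severity values in the sample query are assumptions, not part of any vendor contract.

```kusto
// One table for all API-collected vendor events, following the common schema above.
.create table VendorEvents (
    Timestamp: datetime,
    Device: string,
    Severity: string,
    Category: string,
    Message: string,
    Properties: dynamic   // vendor-specific fields kept as a JSON property bag
)

// Example query across vendors once data is normalized (run separately).
VendorEvents
| where Timestamp > ago(1h) and Severity in ("High", "Critical")
| summarize Events = count() by Device, Category
```

Keeping vendor-specific fields in a `dynamic` column avoids schema changes each time a vendor adds an attribute.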

Data Transformation:

Log transformation should be done in two places: at the edge layer (agents) and at the log store, i.e. Azure Data Explorer.

a) Agents: Do coarse-grained noise reduction, basic parsing, minimal sensitive-data removal, and light enrichment at the edge to reduce volume/cost and prevent sensitive content from leaving hosts or clusters.

b) ADX: Do schema shaping, deep parsing, normalization, advanced masking, joins with reference data, routing, and lifecycle transformations in ADX using ingestion-time update policies and KQL-based pipelines for durable, consistent transformations at scale.

Typical transformations include normalization, PII data masking, sensitive-data eradication, enrichment, and log labelling.
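To make the ADX half of this split concrete, here is a minimal sketch of an ingestion-time update policy. It assumes a raw landing table `RawLogs` with columns `Timestamp`, `Service`, `Level`, `Message`, and `UserEmail`; those names, the function name, and the target schema are all hypothetical. Each command is run on its own.

```kusto
// Curated target table (illustrative schema).
.create table CuratedLogs (Timestamp: datetime, Service: string, Severity: string, Message: string, UserHash: string)

// Transformation applied to newly ingested RawLogs records.
.create-or-alter function CurateRawLogs() {
    RawLogs
    | extend Severity = toupper(Level)              // normalize severity casing
    | extend UserHash = hash_sha256(UserEmail)      // deterministic hash instead of the raw email
    | project Timestamp, Service, Severity, Message, UserHash
}

// Attach the function as an update policy so it runs on every ingestion into RawLogs.
.alter table CuratedLogs policy update
@'[{"IsEnabled": true, "Source": "RawLogs", "Query": "CurateRawLogs()", "IsTransactional": false, "PropagateIngestionProperties": false}]'
```

Because the hash is deterministic, analysts can still correlate activity per user without the raw address being stored in the curated table.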

Log Filters:

OTel Collector can parse raw log lines into structured fields (like time and severity) using regex-based parsing so filters and routing can act on real fields instead of brittle string matches. It can then drop unwanted records at the edge (for example, anything containing the word “secret”) using a filter processor so those logs never leave the node or hit storage.

Fluent Bit tails log files and applies a JSON parser so unstructured lines become structured records, which makes filtering, routing, and indexing far more accurate and efficient. It uses the grep filter to exclude noisy patterns early (for example, health checks or verbose debug lines), cutting costs and noise before data leaves the host.

AMA lets data collection rules select by severity and XPath so only meaningful event IDs and levels are sent, reducing volume at the source. DCR transformations (KQL) can then add new fields, reshape columns, and redact or remove sensitive content before it reaches the workspace.

Log Parsing

OTel’s filelog receiver parses unstructured lines into structured fields using operators such as regex_parser. After parsing at the receiver, transformations can further standardize fields (for example, aligning severity) with OTTL in the transform processor when needed for normalization and routing logic.

Fluent Bit parses at the input using Parsers (JSON or Regex) so filters and outputs operate on structured keys like time, level, and message rather than brittle substring matches.

AMA doesn’t define regex/JSON parsers like agents; instead, parsing is done in Data Collection Rule “transformations” with KQL functions such as parse_json, extract, split, extend, and project before data lands in Log Analytics
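As a small sketch of what such a DCR transformation might look like for a custom JSON text log, the query below assumes the incoming stream exposes each line in a `RawData` column alongside `TimeGenerated`, and that the JSON keys match the sample logs later in this post. The output column names are illustrative and would have to match the destination table.

```kusto
source
// Turn the raw JSON line into a property bag.
| extend d = parse_json(RawData)
// Lift the fields of interest into typed columns.
| extend Level = tostring(d.level), Service = tostring(d.service), Message = tostring(d.log)
// Keep only the shaped columns.
| project TimeGenerated, Level, Service, Message
```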

Data Masking & Eradication:

OTel Collector masks logs using the transform processor and OTTL: redact substrings with regex, delete sensitive attributes, or do deterministic hashing (e.g., SHA256) so correlation is possible without leaking raw values, all inline in the logs context before export.

e.g.: redact card-like patterns, delete auth tokens, and hash emails at source with OTTL.

Fluent Bit masks by removing keys or entire value fields with the modify and record_modifier filters, and uses the Lua filter for partial redaction within strings when regex replacement is needed, enabling precise field-level or substring masking at high throughput on the edge.

e.g.: delete sensitive keys (e.g., password, token) or allowlist only safe keys, add placeholders, and apply custom redaction logic via Lua to mask substrings like emails, IPs, SSNs, or card numbers across any fields in a record.

AMA (Azure Monitor Agent) applies masking in DCR transformations: drop sensitive columns by not projecting them, set sensitive fields to constants like “redacted”, and perform pattern-based rewrites using supported KQL operators. Note that not all KQL string functions are available in DCR transforms, so rely on the documented supported set and test in the DCR editor.

e.g.: remove secrets and redact card-like strings with a supported replace form and projection in a DCR transformation query.

Sample raw logs, which include noise, PII data, and secrets/passwords

    {"time":"2025-08-31T16:40:01Z","level":"DEBUG","service":"payments","log":"healthcheck ok"}
    {"time":"2025-08-31T16:40:02Z","level":"VERBOSE","service":"cart","log":"retrying request"}
    {"time":"2025-08-31T16:40:03Z","level":"INFO","service":"orders","log":"pay 4111-1111-1111-1111 PAN AAAPL1234C Aadhaar 3675 9834 6012"}
    {"time":"2025-08-31T16:40:04Z","level":"WARN","service":"auth","Authorization":"Bearer eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9.eyJ1c2VyIjoiYWJjIn0.RxYb2V7o0wTF3aoRkFkh7y8H0Pr1dI3o1gqTQc2y7yY","password":"P@ssw0rd!","log":"login failed"}
    2025-08-31T16:40:01Z DEBUG payments: healthcheck ok
    2025-08-31T16:40:02Z VERBOSE cart: retrying request
    2025-08-31T16:40:03Z INFO orders: pay 4111-1111-1111-1111 PAN AAAPL1234C Aadhaar 3675 9834 6012
    2025-08-31T16:40:04Z WARN auth: login failed password="P@ssw0rd!" Authorization="Bearer eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9.eyJ1c2VyIjoiYWJjIn0.RxYb2V7o0wTF3aoRkFkh7y8H0Pr1dI3o1gqTQc2y7yY"

Sample OTel Collector YAML config based on the raw logs

    receivers:
      filelog/app:
        include: [/var/log/app/*.log]
        start_at: beginning
        operators:
          # Split "<ts> <LEVEL> <service>: <message>" lines into attributes
          - type: regex_parser
            regex: '^(?P<ts>[^ ]+) (?P<level>[A-Z]+) (?P<service>[^:]+): (?P<body>.*)$'
            timestamp:
              parse_from: attributes.ts
              layout: '%Y-%m-%dT%H:%M:%S%z'
            severity:
              parse_from: attributes.level

    processors:
      # A record is dropped when any of these conditions matches
      filter/logs:
        logs:
          log_record:
            - 'attributes["level"] == "DEBUG" or attributes["level"] == "VERBOSE"'
            - 'IsMatch(body, "(?i)healthcheck")'
            - 'IsMatch(body, "(?i)password=")'
            - 'IsMatch(body, "(?i)authorization=")'
      transform/logs:
        log_statements:
          - context: log
            statements:
              # Redact JWT (JWS/JWE)
              - replace_pattern(body, "(?:ey[A-Za-z0-9_-]+\\.){2,4}[A-Za-z0-9_-]+", "JWT_REDACTED")
              # Redact credit cards (common 4-4-4-4 or 16 digits with separators)
              - replace_pattern(body, "((?:\\d{4}[-\\s]*){3}\\d{4})", "****-****-****-****")
              # Redact PAN (AAAAA9999A)
              - replace_pattern(body, "\\b[A-Z]{5}[0-9]{4}[A-Z]\\b", "PAN_REDACTED")
              # Redact Aadhaar (spaced and unspaced; starts 2-9)
              - replace_pattern(body, "\\b[2-9][0-9]{3}\\s[0-9]{4}\\s[0-9]{4}\\b", "AADHAAR_REDACTED")
              - replace_pattern(body, "\\b[2-9][0-9]{11}\\b", "AADHAAR_REDACTED")

    exporters:
      otlphttp:
        # logs_endpoint takes the full URL; endpoint would have /v1/logs appended
        logs_endpoint: https://collector.example/v1/logs

    service:
      pipelines:
        logs:
          receivers: [filelog/app]
          processors: [filter/logs, transform/logs]
          exporters: [otlphttp]

Sample Fluent Bit configuration (classic format) for the raw logs

[PARSER]

Name app_json

Format json

[INPUT]

Name tail

Path /var/log/app/app.jsonl

Parser app_json

Tag app.logs

# Filter out DEBUG/VERBOSE and healthchecks

[FILTER]

Name grep

Match app.logs

Exclude level ^(DEBUG|VERBOSE)$

[FILTER]

Name grep

Match app.logs

Exclude log healthcheck

# Eradicate secret-bearing keys

[FILTER]

Name modify

Match app.logs

Remove password

Remove Authorization

Remove access_token

Remove refresh_token

Add pii_masked true

# Redact JWT, Credit Cards, PAN, Aadhaar in strings

[FILTER]

Name lua

Match app.logs

Script redact.lua

Call filter

[OUTPUT]

Name azure_kusto

Match *

# --- ADX connection ---

Ingestion_Endpoint https://ingest-adx-log-store-01.eastus2.kusto.windows.net

Database_Name log_store

Table_Name FluentBitLogs

# --- Auth (choose ONE) ---

Tenant_Id 89b26e60-021d-43d4-8e47-b0bb496a95a4

Client_Id 93e81a16-d997-4027-93e0-5fc660ceb022

Client_Secret xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx

# --- Reliability / performance ---

buffering_enabled On

buffer_dir C:\ProgramData\fluent-bit\buffer

upload_timeout 2m

upload_file_size 150M

store_dir_limit_size 8GB

compression_enabled On

Sample Azure Monitor Agent DCR transformation to filter and parse the raw log text

source

// Filter out noise (DEBUG/VERBOSE/healthcheck)

| where tolower(tostring(severity)) !in ("debug","verbose")

| where tostring(message) !contains "healthcheck"

// Remove secret-bearing columns

| project-away password, authorization, access_token, refresh_token, id_token

// Redact JWT, credit cards, PAN, Aadhaar inside message

| extend m = tostring(message)

| extend m = replace_regex(m, @"(ey[\w\-_]+\.){2,4}[\w\-_]+", "JWT_REDACTED")

| extend m = replace_regex(m, @"((\d{4}[-\s]*){3}\d{4})", "****-****-****-****")

| extend m = replace_regex(m, @"\b[A-Z]{5}[0-9]{4}[A-Z]\b", "PAN_REDACTED")

| extend m = replace_regex(m, @"\b[2-9][0-9]{3}\s[0-9]{4}\s[0-9]{4}\b", "AADHAAR_REDACTED")

| extend m = replace_regex(m, @"\b[2-9][0-9]{11}\b", "AADHAAR_REDACTED")

// Final schema

| project TimeGenerated = todatetime(['time']), Severity = tostring(severity), Message = m

Storage & Retention

Hot

Where it is: Local SSD on ADX engine nodes

Control: Caching policy (pin the last N days in cache)

Purpose: Low‑latency dashboards and investigations on recent data

Notes: Data is still persisted to Azure Storage; hot is a performance cache.

Warm

Where it is: ADX internal extents stored on Azure Storage (not pinned to SSD)

Control: Retention policy (soft‑delete window, plus recoverability option for 14‑day post‑delete recovery)

Purpose: Historical analytics that you still want inside ADX for fast access without keeping everything in SSD

Notes: Great for 30–180‑day investigative horizons, then let retention reclaim space.

Cold

Where it is: External ADLS/Blob storage attached to the ADX cluster

Control: Continuous/periodic export from ADX; define external tables for on‑demand queries; keep storage with immutability/lifecycle rules if required

Purpose: Long‑term, low‑cost retention; query on demand or restore a slice back to ADX for hot performance

Lifecycle Policies

Caching and retention policies control how data moves across the tiers, from hot to cold storage.

Ingest: Agents send sanitized logs to ADX CuratedLogs with ingestion mappings and update policies (already in place from the edge work), so operational queries run fast on hot/warm tiers.

Warm: Cache policy keeps the freshest 15 days “hot,” while older data stays “warm” and is still queryable from the cluster

Archive: Continuous export streams the same data to ADLS in Parquet for long‑term retention; after 90 days, ADX retention deletes aged extents, but archived data remains queryable via external tables, or can be rehydrated for high‑performance needs
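The hot and warm windows described above map onto two table-level policies. The commands below are a sketch using the 15-day and 90-day figures from this section and the `CuratedLogs` table name; tune both values to your own compliance and cost targets, and run each command separately.

```kusto
// Keep the most recent 15 days on local SSD (hot cache).
.alter table CuratedLogs policy caching hot = 15d

// Keep data in the cluster for 90 days, with recoverability after deletion enabled.
.alter-merge table CuratedLogs policy retention softdelete = 90d recoverability = enabled

// Inspect the policies currently in effect.
.show table CuratedLogs policy caching

.show table CuratedLogs policy retention
```

Policies can also be set at the database level and overridden per table, which is convenient when only a few tables need a longer hot window.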

Example:

Hot → warm → archive timeline

Days 0–15: Data is “hot” in local SSD due to the caching policy and is immediately queryable at the lowest latency

Days 16–90: Data cools to “warm”; it’s still in the cluster’s reliable storage and fully queryable, just less likely to be in SSD unless a hot window is defined for targeted historical ranges.

After 90 days: Extents age out per retention policy; by then, the continuous export has already landed Parquet copies in ADLS, so the same data can be queried in place via external tables or used for offline analytics pipelines
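The archive leg of this timeline can be sketched as an external table plus a continuous export. Everything named here is an assumption for illustration: the storage URL, the `managed_identity=system` authentication suffix, the `ArchivedLogs` and `ArchiveCuratedLogs` names, and the schema. Authentication for continuous export has its own requirements, so check the export documentation before reusing this.

```kusto
// External table over ADLS/Blob, written as Parquet and partitioned by day.
.create external table ArchivedLogs (Timestamp: datetime, Service: string, Severity: string, Message: string)
kind = storage
partition by (Date: datetime = bin(Timestamp, 1d))
dataformat = parquet
(
    h@'https://mystorageaccount.blob.core.windows.net/logarchive;managed_identity=system'
)

// Continuously export new CuratedLogs records to the external table.
.create-or-alter continuous-export ArchiveCuratedLogs
over (CuratedLogs)
to table ArchivedLogs
with (intervalBetweenRuns = 1h)
<| CuratedLogs
| project Timestamp, Service, Severity, Message

// Query archived data in place after it has aged out of the cluster.
external_table("ArchivedLogs")
| where Timestamp between (datetime(2025-01-01) .. datetime(2025-01-02))
| where Severity == "ERROR"
| count
```

Filtering on the partition column's source (`Timestamp`) lets the engine skip unrelated folders, which matters because every cold read incurs storage transactions.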

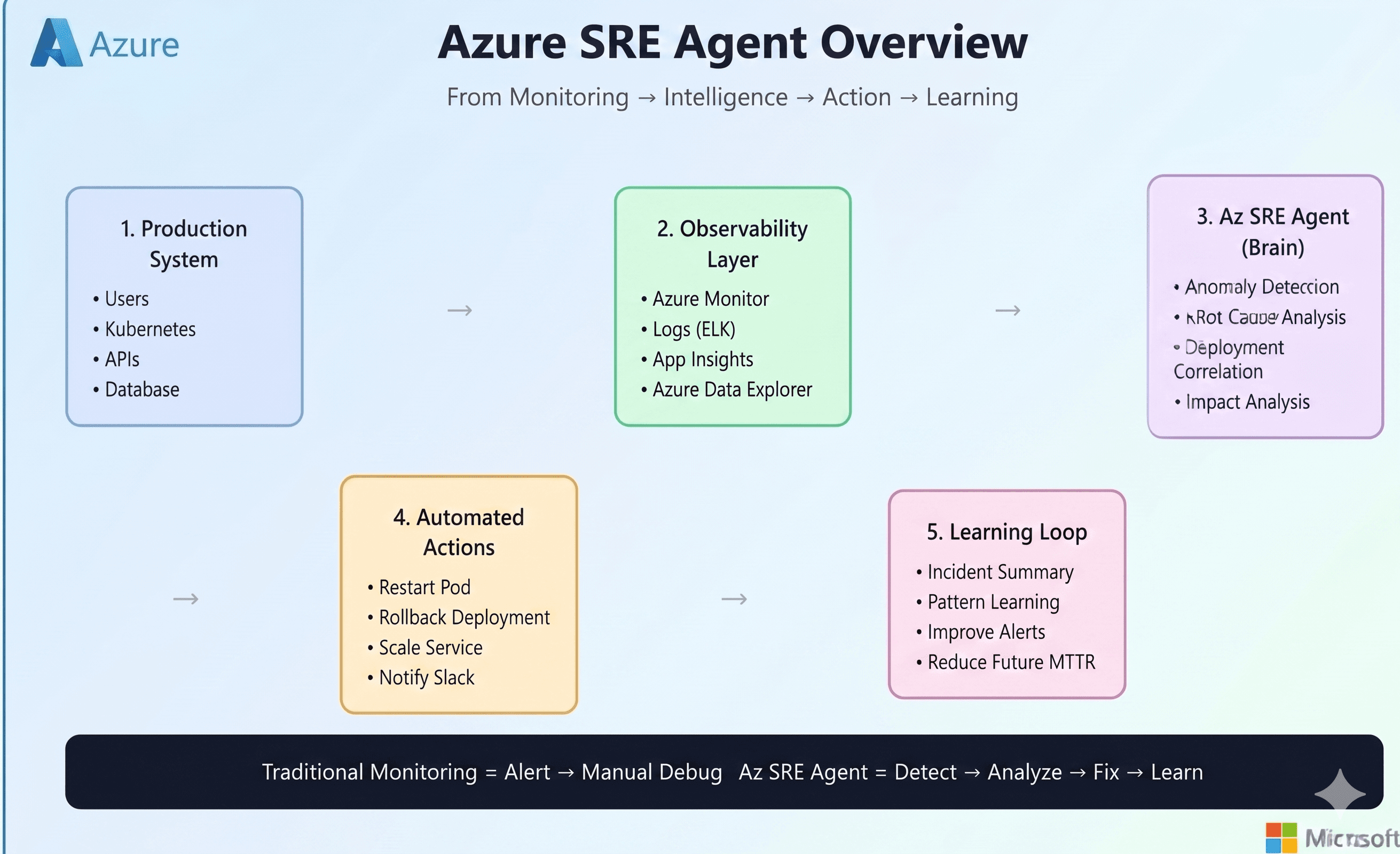

Analytics & AIOps

I have ingested two sample application logs into ADX, named 'Java' and 'dotnet'. With these in place it is easy to build a dashboard that shows the status of the application logs.

//Check distribution of each log type

appLogs

| summarize count() by log_type

| render piechart

//Error logs over time

appLogs

| where level == "ERROR"

| summarize count() by level, bin(timestamp,1m)

| render timechart

//Distribution of each log type over time

appLogs

| summarize count() by log_type, bin(timestamp,5m)

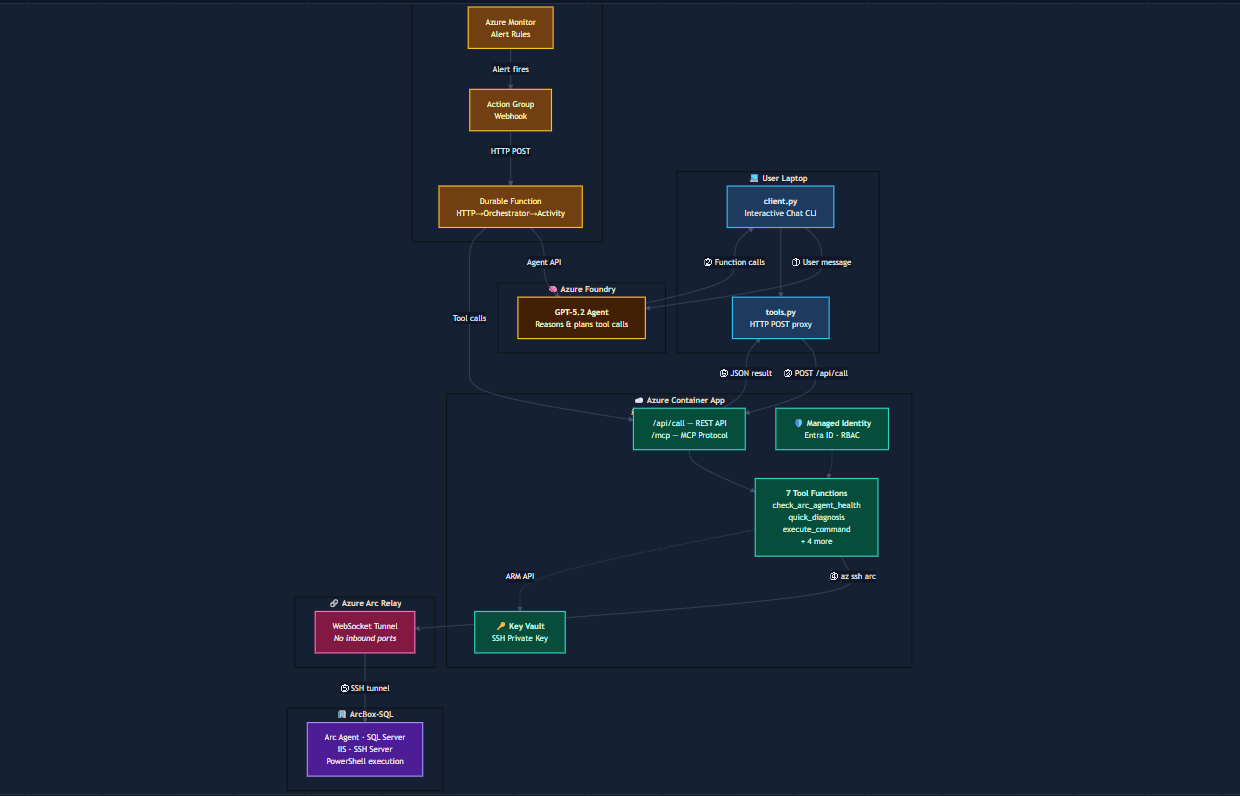
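Beyond dashboards, the same table can feed simple AIOps-style checks. The query below is a sketch that reuses the `appLogs` columns from the queries above and flags unusual error counts with the built-in `series_decompose_anomalies` function; the one-day window, 5-minute step, and threshold of 1.5 are arbitrary starting points rather than recommendations.

```kusto
appLogs
| where level == "ERROR"
// Build a regular time series of error counts per log type.
| make-series errors = count() default = 0 on timestamp from ago(1d) to now() step 5m by log_type
// Flag points that deviate from the decomposed baseline.
| extend (anomalies, score, baseline) = series_decompose_anomalies(errors, 1.5)
| render anomalychart with (anomalycolumns = anomalies)
```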

Azure MCP/Data Agents in ADX:

The Azure MCP Server implements a full open-source MCP server integration that supports natural language queries while dynamically discovering schemas and metadata from ADX resources. This capability enables seamless interaction with log lakes and data repositories without requiring prior KQL knowledge, making data exploration accessible to a broader range of users including developers, analysts, and business stakeholders.

Example Use Case

Natural Language Question:

"I want to know about trends observed in ADX from my sample application (Java/DotNet) logs."

The MCP server translates this query into appropriate KQL statements, executes them against the specified ADX database, and returns trend analysis results—all without the user writing a single line of KQL code.

Example Use Case: Root Cause Analysis

Ask the agent to perform an RCA of an error observed in the application logs and to recommend a possible next set of actions.

Security & Compliance (RBAC, Encryption, Connectivity, RLS)

RBAC for ADX:

Permission Hierarchy

Cluster Scope provides organization-wide control with roles for all databases (Admin, Viewer, Monitor) and cluster management operations.

Database Scope offers granular access through Admin (full control), User (query and create entities), Viewer (read-only queries), Ingestor (data ingestion), Monitor (metadata viewing), and Management (permission administration) roles.

Table Scope restricts permissions to specific tables with Admin (full control), Ingestor (data ingestion), and Management (role assignment) capabilities.

External Table Scope manages permissions for external data sources, requiring Database User or Viewer roles as prerequisites, with Admin and Management roles available.

Materialized View Scope controls access to specific views, requiring Database User or Table Admin permissions, with Admin (alter/delete/delegate) and Management (role assignment) roles.

Row-Level Security enables data filtering based on user identity, restricting query results to authorized rows within tables.

All role assignments are managed through Kusto management commands, with higher-level roles (cluster/database) typically granting access to lower-level resources within their scope.
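A few of these assignments, sketched as management commands. The group names, the application and tenant placeholders, and the `Service` column are hypothetical; `log_store`, `FluentBitLogs`, and `CuratedLogs` follow names used elsewhere in this post. Run each command separately.

```kusto
// Database scope: read-only access for an analyst group.
.add database log_store viewers ('aadgroup=soc-analysts@contoso.com') 'SOC analysts, read-only'

// Table scope: let one ingestion application write to a single table only.
.add table FluentBitLogs ingestors ('aadapp=<application-id>;<tenant-id>') 'Edge collector'

// Row-Level Security: admins see everything, the payments team sees only its own rows.
.create-or-alter function CuratedLogs_RLS() {
    CuratedLogs
    | where current_principal_is_member_of('aadgroup=log-admins@contoso.com')
        or (Service == "payments" and current_principal_is_member_of('aadgroup=payments-team@contoso.com'))
}

.alter table CuratedLogs policy row_level_security enable "CuratedLogs_RLS()"

// Review who currently has access.
.show database log_store principals
```

Granting the collector only the table-level Ingestor role keeps a leaked agent credential from being usable for queries.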

Network Connectivity:

Public Connectivity

ADX clusters can be accessed over the public internet using a public endpoint (URL), allowing users with valid identities to connect from any location if public access is enabled.

Administrators can restrict public accessibility using IP address filtering, specifying allowed IP addresses, service tags, or CIDR ranges in the Azure portal

Private Connectivity

ADX offers private endpoint integration using Azure Private Link, which exposes the cluster inside a designated virtual network through a private IP address for secure, internal connectivity.

Clients on the same virtual network connect seamlessly via private endpoints using standard connection strings; DNS resolution routes traffic internally, isolating access from the public internet

Encryption

ADX data stored in Azure Storage is automatically encrypted by default using Microsoft-managed keys at the service level.

Double encryption can optionally be enabled, adding infrastructure-level encryption on top of service-level encryption using separate algorithms and keys. This dual-layer approach protects against scenarios where one encryption algorithm or key becomes compromised

Customer-Managed Keys: Organizations requiring additional control can configure customer-managed keys stored in Azure Key Vault instead of Microsoft-managed keys. This option provides full control over key lifecycle operations including creation, rotation, disabling, and revocation, with audit capabilities for compliance requirements. CMK is configured at the cluster level and requires Azure Key Vault integration

Cost Controls

Understanding what drives ADX cost

Storage duration: The longer you store data, the higher the cost. Each table and materialized view has a retention policy that defines how long the data is kept.

High CPU usage: Actions like heavy queries, data processing, or transformations cause high CPU usage.

Cache settings: Caching more data boosts performance but can increase costs. The caching policy lets you choose which data should be cached. You differentiate between hot data and cold data by setting a caching policy for hot data. Hot data is kept in local SSD storage for faster query performance, while cold data is stored in object storage.

Cold data usage: Queries that access cold data trigger read transactions and add to cost.

Data transformation and optimization: Features like Update Policies, Materialized Views, and Partitioning consume CPU resources and can raise cost.

Ingestion volume: Clusters operate more cost-effectively at higher ingestion volumes. Clusters with a higher cost per GB are less common and usually have lower ingestion volumes; in general, smaller data volumes mean a higher cost per GB.

Schema design: Wide tables with many columns need more compute and storage resources, which raises costs.

Advanced features: Options like followers, private endpoints, and Python sandboxes consume more resources and can add to cost.
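To see where storage and cache spend is concentrated, one option is to list tables by size next to their current policies. This is a sketch; the column names are those I expect in the `.show tables details` output and should be checked against your cluster.

```kusto
// Largest tables first, with how much of each is held in hot cache.
.show tables details
| project TableName, TotalOriginalSize, TotalExtentSize, HotExtentSize, CachingPolicy, RetentionPolicy
| order by TotalExtentSize desc
```

Tables where `HotExtentSize` is close to `TotalExtentSize` but which are rarely queried are natural candidates for a shorter hot window.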

Conclusion:

A centralized log store is no longer a “nice to have” for large enterprises—especially in financial services. When daily volumes cross 100TB and logs span everything from Windows/Linux to legacy Unix variants, appliances, and multiple clouds, decentralised tooling quickly becomes costly, noisy, and operationally brittle. What enterprises need instead is a single, governed observability plane: one place to ingest at scale, normalize, secure, retain, and analyze logs consistently across the entire estate.

The architecture outlined in this post addresses that reality by combining flexible collection options (AMA, Fluent Bit, OpenTelemetry, syslog collectors, and batch ingestion) with a scalable analytics engine. Azure Data Explorer is purpose-built for high-volume ingestion and fast interactive querying across structured, semi-structured, and free-text data, and it supports both streaming and batch ingestion patterns, making it a strong foundation for enterprise-wide log analytics.

Equally important, the solution treats governance and cost as first-class design goals. By performing coarse filtering + sensitive-data redaction at the edge, and reserving deep parsing/normalization and durable transformations in the log store, you reduce noise, shrink spend, and ensure sensitive data is controlled before it spreads. On the retention side, tiering logs through hot/warm and into low-cost archive with export-based lifecycle keeps recent investigations fast while meeting long retention requirements.

Finally, once logs are centralized and curated, the platform becomes more than storage: it becomes an enabler for security investigations, compliance reporting, troubleshooting, and AIOps (correlation, anomaly detection, and faster RCA). The net outcome is a standardized, scalable, and secure logging strategy that can evolve over time without rewriting every collector whenever backend analytics or storage choices change.